Overview

Looking to integrate a voice assistant into your app?

Artificial intelligence has played a major role in improving how people interact with devices, as developments such as voice recognition and voice control of machines show. Virtual assistants are growing rapidly across the entire ecosystem. Our team works to keep up with these developments in order to provide high-quality, optimal solutions. We can integrate voice assistants such as Siri and Cortana into an app.

How to include a voice assistant in a mobile app

There are three ways to make your app understand spoken language and hold a conversation.

Each method plays an important part in building a voice assistant app, and each comes with its own benefits and risks. Apple and Google are reluctant to open their voice assistants to third-party developers, and the existing voice assistants may not meet all of your expectations. Below is a closer look at each approach.

3 surefire solutions

Using external open source solutions

Melissa

Jasper

Api.ai

Wit.ai

Independent

STT

TTS

Intelligent tagging

Noise control

Voice Biometrics

Speech compression

Voice interface
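As a rough illustration of how the independent building blocks above fit together, here is a minimal Python sketch of a single assistant turn: speech-to-text (STT), a response step, then text-to-speech (TTS). Every function name here is hypothetical, and the STT and TTS steps are stand-ins that simply treat the audio as UTF-8 text. A real assistant would plug actual speech engines into the same three slots.

```python
from typing import Callable


def fake_speech_to_text(audio: bytes) -> str:
    """Stand-in STT step: pretends the audio bytes are UTF-8 text."""
    return audio.decode("utf-8").strip().lower()


def fake_text_to_speech(text: str) -> bytes:
    """Stand-in TTS step: returns the reply as bytes instead of audio."""
    return text.encode("utf-8")


def simple_responder(transcript: str) -> str:
    """Very small keyword-based response step (illustrative only)."""
    if "hello" in transcript:
        return "Hello! How can I help?"
    if "music" in transcript:
        return "Playing some music."
    return "Sorry, I did not understand that."


def run_assistant(
    audio: bytes,
    stt: Callable[[bytes], str] = fake_speech_to_text,
    respond: Callable[[str], str] = simple_responder,
    tts: Callable[[str], bytes] = fake_text_to_speech,
) -> bytes:
    """Run one turn of the assistant: STT, then respond, then TTS."""
    transcript = stt(audio)
    reply = respond(transcript)
    return tts(reply)


if __name__ == "__main__":
    print(run_assistant(b"Hello there"))
```

Because each stage is passed in as a function, a stand-in can be swapped for a real engine without touching the rest of the pipeline.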

The best virtual assistants and how they are integrated into an app

The most widely used assistants are Siri, Cortana and Google Now.

Below are more details on these three voice assistants and why the majority of users prefer them.

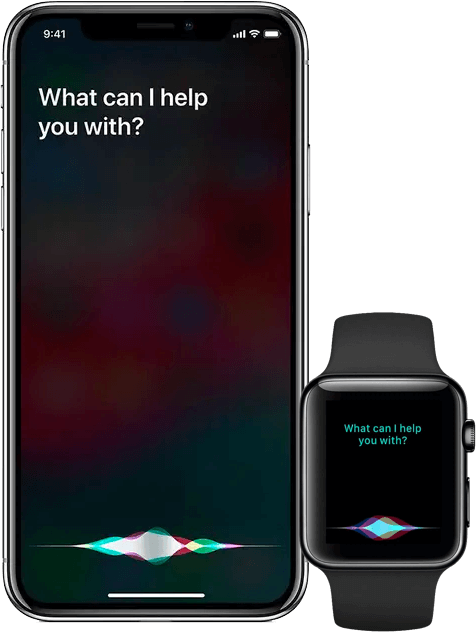

iOS Applications

Hi Siri!

This integration is made possible by SiriKit, which consists of two frameworks. The first, Intents, covers the tasks the app supports, while the second, Intents UI, lets the app supply a custom visual representation when one of those tasks is performed.

Siri comes with a variety of intents, each with its own class whose defined properties describe the task accurately. More details on how Siri works can be found on Apple’s website.

Since the release of iOS 10, Siri can be integrated with apps that work in the following areas:

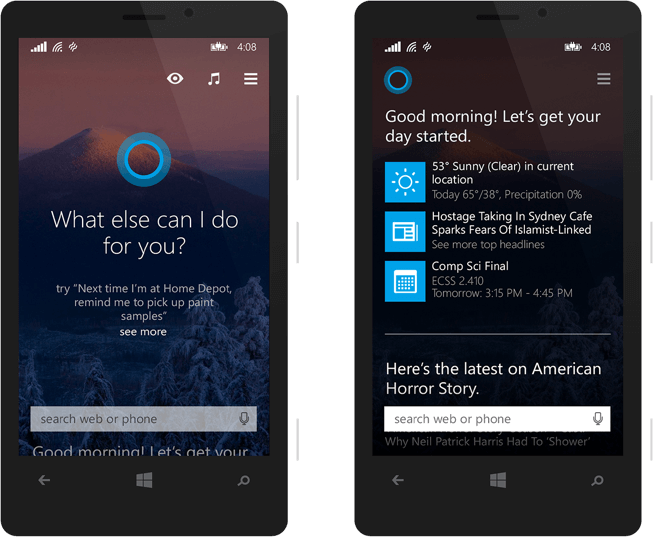

Windows Application

Hi Cortana!

Through Cortana, speech commands can activate an app in either the background or the foreground. Background activation suits simple apps that need no additional instructions, while foreground activation suits apps that work with more complex commands.

Cortana is a Microsoft product that also lets users set up voice control without calling Cortana directly. This involves the following basic ways:

Amazon Alexa Application

Hey Alexa!

“Alexa, book a taxi for me.” Alexa will open the relevant taxi booking application and order a taxi for you, providing a fully conversational experience. It is that simple. With Amazon Echo and Echo Dot smart speakers in wide use globally, we encourage our clients to consider custom Alexa skill development. Our Alexa skill developers are proficient in Node.js and Python, and work with technologies such as ASK, Atom, AWS Lambda and Azure Cloud, as well as AWS services like S3 and CloudWatch. With quality Amazon Echo app development and design services, we aim to reimagine customer experiences while enabling voice-driven computing.
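To give a feel for what a custom skill backend looks like, here is a minimal Python sketch of an AWS Lambda handler for the taxi example above, written without any SDK. It assumes the standard Alexa Skills Kit JSON request and response shape for custom skills. The intent name `BookTaxiIntent` and the spoken replies are hypothetical, and no real booking takes place.

```python
def build_speech_response(text, end_session=True):
    """Wrap plain text in the JSON structure Alexa expects from a custom skill."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def lambda_handler(event, context=None):
    """Entry point that AWS Lambda would call with the Alexa request as `event`."""
    request = event.get("request", {})
    request_type = request.get("type")

    if request_type == "LaunchRequest":
        # The user opened the skill without asking for anything specific.
        return build_speech_response(
            "Welcome. Where would you like to go?", end_session=False
        )

    if request_type == "IntentRequest":
        intent_name = request.get("intent", {}).get("name")
        if intent_name == "BookTaxiIntent":  # hypothetical intent name
            # A real skill would call a booking service here.
            return build_speech_response("Your taxi is on its way.")

    return build_speech_response("Sorry, I cannot help with that yet.")


if __name__ == "__main__":
    sample_event = {
        "request": {"type": "IntentRequest", "intent": {"name": "BookTaxiIntent"}}
    }
    print(lambda_handler(sample_event))
```

In practice the intent and its sample utterances would also have to be declared in the skill's interaction model, which this sketch leaves out.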

Android Applications

Hello Google!

Google consistently embraces innovative solutions and technologies to make our lives simpler. One of its products, Google Nest, makes daily life more comfortable and easier by letting you manage your routine activities with your voice. You can play soothing music, catch up on the latest news from trusted sources, listen to podcasts and much more. To use Google Nest, simply speak a command. At Amplework, we are adept at delivering voice-driven computing solutions. Using cutting-edge technologies, we develop data-driven solutions that meet the needs of our clients.

Independent Services to make your own voice assistant

Below is a list of technologies which we employ at Amplework Technologies to create amazing voice assistant apps.

Melissa

This is a new technology that lets users add or modify an individual feature without having to rewrite the whole algorithm. Melissa can perform tasks such as speaking, taking notes, reading the news and playing music, among others. It is written in Python and runs on Windows, Linux and OS X.

Jasper

It is written in Python and runs on the Raspberry Pi Model B, which makes it a great tool for Raspberry Pi fans. It suits developers who would like to create a customized AI assistant without much external support.

Api.ai

This technology covers a wide range of tasks and comes with capabilities such as voice recognition and text-to-voice conversion. It can also work in a private cloud, and it comes in both free and paid versions. In addition, it provides a wide range of APIs, which makes it possible to create a personal assistant using available platforms and technologies.

Wit.ai

This technology makes it easier to create an assistant, since it requires only intents and entities to set up the app. In addition, the intents are provided to the developer, which removes the considerable work of creating them. It also comes with many APIs, which makes development easier.
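For readers new to the terms, an intent is what the user wants to do and entities are the details needed to do it. The Python sketch below illustrates the idea with a toy rule-based parser. It is not the Wit.ai API, and the intent names, patterns and the `parse` function are all hypothetical. A hosted service would replace the regular expressions with trained language models.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ParsedUtterance:
    """Result of understanding one utterance: an intent plus its entities."""
    intent: str
    entities: dict = field(default_factory=dict)


# Hypothetical intents; named groups in each pattern become entities.
INTENT_PATTERNS = {
    "set_alarm": re.compile(
        r"\b(?:wake me|set an alarm)\b.*?\bat "
        r"(?P<time>\d{1,2}(?::\d{2})?\s?(?:am|pm)?)"
    ),
    "play_music": re.compile(r"\bplay (?P<track>.+)"),
}


def parse(utterance: str) -> ParsedUtterance:
    """Return the first matching intent and the entities found in the text."""
    text = utterance.lower().strip()
    for intent, pattern in INTENT_PATTERNS.items():
        match = pattern.search(text)
        if match:
            entities = {
                name: value.strip()
                for name, value in match.groupdict().items()
                if value
            }
            return ParsedUtterance(intent, entities)
    return ParsedUtterance("unknown")


if __name__ == "__main__":
    print(parse("Set an alarm at 7 am"))
    print(parse("Play Bohemian Rhapsody"))
```

The app then only has to map each intent name to an action and read the values it needs from the entities.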

How to build your own voice assistant?

If you intend to create your own Siri or Google Assistant, make sure you have the required skills and use the following basic technologies.
