Demystifying Continuous Integration, Delivery, and Deployment

If you are new to CI/CD, this guide is meant as a starting point. Traditional software engineering organizations split work among separate teams for development, build, testing and quality assurance, release, and operations. This approach tries to scale output by adding people in silos, with distinct roles and manual handoffs between them. Those handoffs introduce human error and delay into the work of delivering better software.

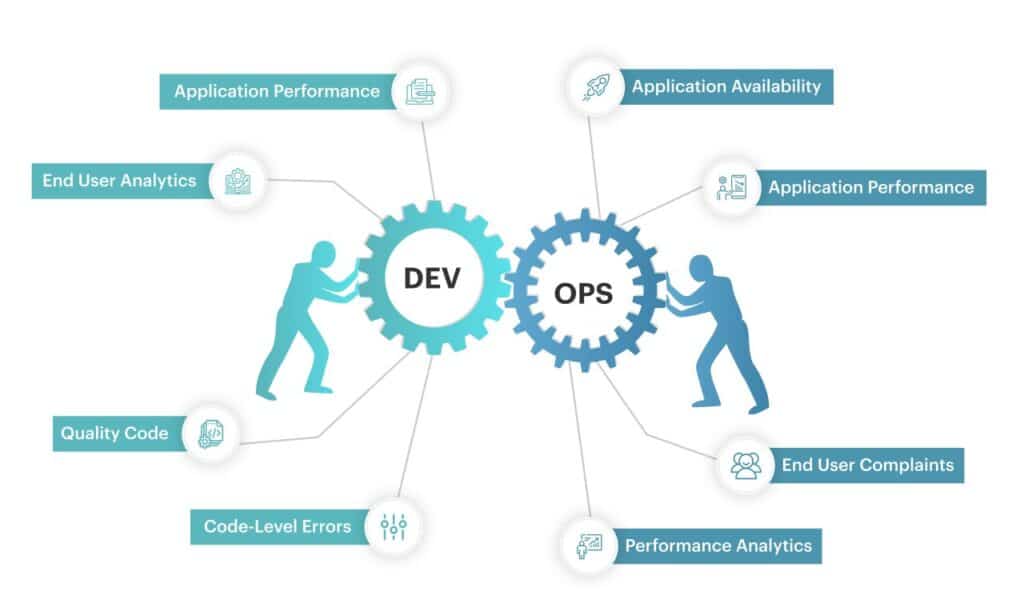

The question is how DevOps helps solve these conventional problems, which come down to the difficulty of scaling human labor to speed up the delivery of increasingly complex software to the client. The sections below explain what CI/CD and DevOps are.

What is CI/CD?

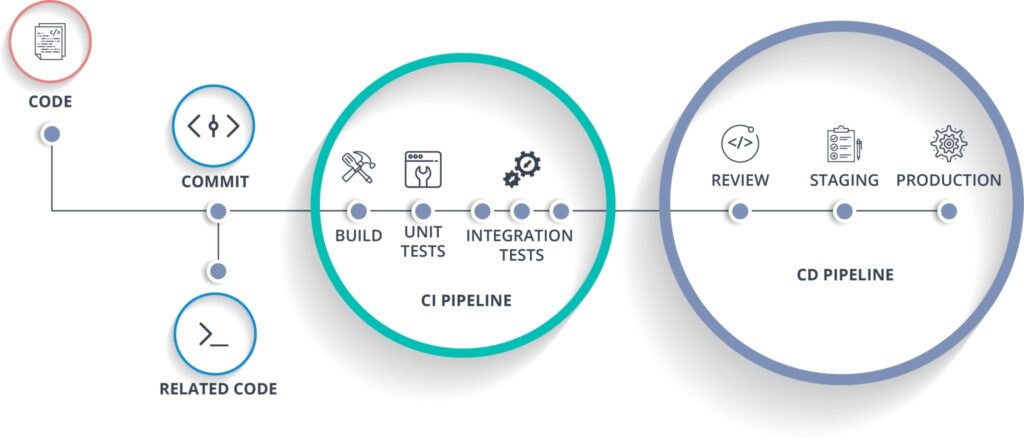

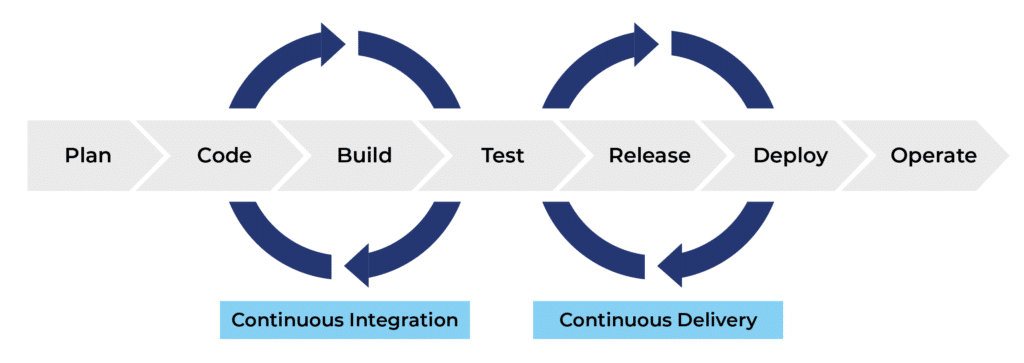

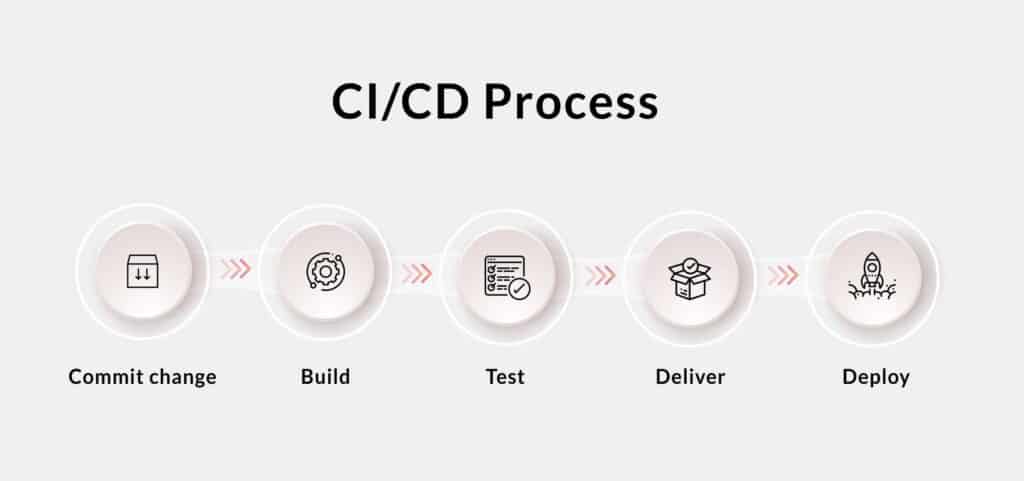

Continuous integration/continuous delivery (CI/CD) is a collection of practices that enables software development teams to deliver code changes more frequently and more reliably. CI/CD is part of DevOps and helps shorten the software development life cycle.

In its most basic form, CI is a contemporary software development technique in which incremental code changes are made frequently and reliably. Automated build-and-test stages triggered by CI ensure that code changes merged into the repository are trustworthy. As part of the CD process, the code is then delivered quickly and smoothly.

What is DevOps?

DevOps is a term that covers the entire spectrum of software engineering and the software development life cycle, with the goal of lowering friction while still giving customers valuable output. By automating the software development and release processes, DevOps seeks to address the problems that conventional engineering organizations face. This approach achieves continuous integration, delivery, and deployment.

Software Delivery Pipeline

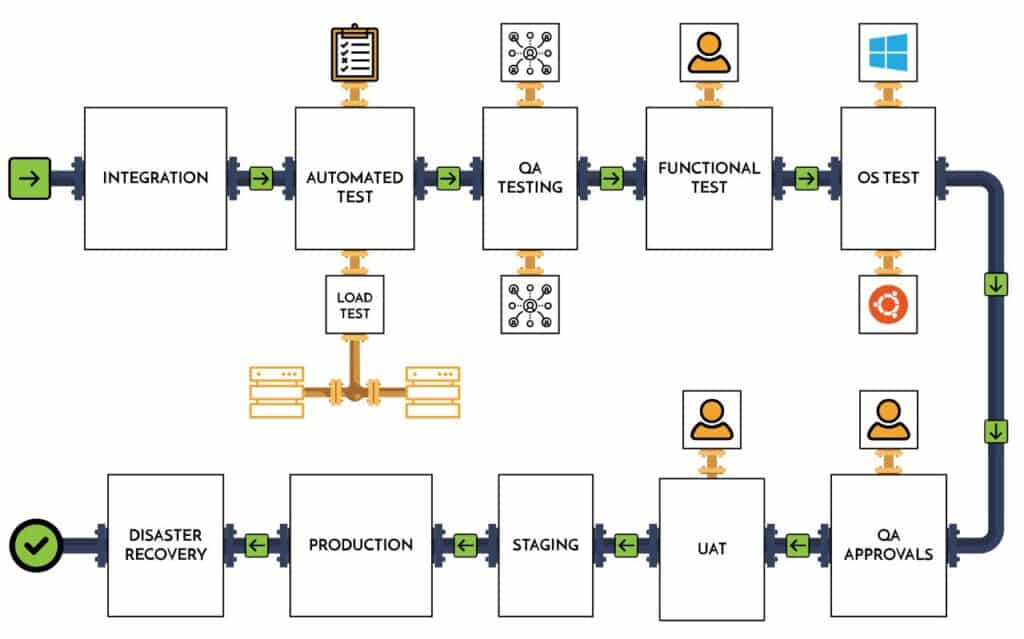

The route from a developer to a client can be described as a flowchart. This flowchart ties together the people and the systems whose goal is to build and release the software. A single organization usually has numerous software teams, and each team may use its own processes, techniques, tools, and timelines to create its software. As a result, one organization may run several different software pipelines, each with its own automated build and release facilities.

Seen from a high level, the software delivery pipeline includes the following steps:

Step 1. System Building

IT organizations often strive to unify their development environments, making them consistent and immutable for every developer. At the same time, the organization needs to allow developers to customize their working environment, because this is what keeps them productive. Policies must therefore be well justified, so that developers do not treat them as an excuse to break the rules. DevOps practitioners should be aware of this tension; it is a topic that will be tackled in the future.

Step 2. Integration of environment

Different environments may suit different developers, but builds produced in different environments can differ from one another. The conventional software engineering answer is to adopt a build system that is:

- Documented and reproducible: it serves as a reference for understanding how a local environment differs from the official one.

- Maintained and consistent: the system must be kept up to date, and changes to it must be properly managed so that the environment does not drift from a known-good state and configuration.

- Official and centralized: the system lets the organization manage and converge its policies and methods in one place.

Step 3. Software repository or reaching the destination

At this step the build system is often extended to take on additional organizational processes, even though a build system knows nothing about the full software development life cycle. Such extensions require investment to customize, and they concentrate risk, dependencies, and complexity in one place for the engineering organization. As noted above, a centralized build system can also become a point of conflict between developers' local environments and the official one. Optimizing the build system and reworking the software and mobile app development pipeline to meet the organization's requirements is a main objective of DevOps, and a subject for a future blog entry. Let us now discuss continuous integration.

Aim of CI/CD pipeline

In the software business, the CI/CD pipeline refers to the automation that allows incremental code changes from developers’ workstations to be delivered quickly and reliably to production. The aim of CI/CD is therefore to build software artifacts, test them, deliver them as an application, and deploy them in an automated way. The pipeline involves:

- Commit: a developer commits a change to the software repository.

- Continuous Integration (CI): the build system regularly runs build jobs, which build the software and perform integration testing on the built artifacts in isolation.

- Continuous Delivery: artifacts that pass Continuous Integration are promoted to an integrated environment for application integration testing, where they are tested as a whole application.

- Continuous Deployment: artifacts that pass application integration testing are promoted and deployed to a destination environment.
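
The stages above can be sketched as a chain of gates in which an artifact is promoted only when the previous stage passes. This is a minimal illustration rather than a real CI tool: the `Artifact` class, the stage functions, and the `passes_*` flags are hypothetical names that stand in for real build and test jobs.

```python
from dataclasses import dataclass, field


@dataclass
class Artifact:
    """Hypothetical build artifact produced from one commit."""
    commit: str
    passes_isolated_tests: bool = True      # stand-in for CI integration tests
    passes_application_tests: bool = True   # stand-in for application integration tests
    history: list = field(default_factory=list)


def continuous_integration(artifact: Artifact) -> bool:
    # Build the artifact and test it in isolation.
    artifact.history.append("ci")
    return artifact.passes_isolated_tests


def continuous_delivery(artifact: Artifact) -> bool:
    # Promote to an integrated environment and test the whole application.
    artifact.history.append("delivery")
    return artifact.passes_application_tests


def continuous_deployment(artifact: Artifact) -> bool:
    # Promote and deploy to the destination environment.
    artifact.history.append("deployment")
    return True


def run_pipeline(commit: str, **flags) -> Artifact:
    """Run the stages in order, stopping at the first failing gate."""
    artifact = Artifact(commit=commit, **flags)
    for stage in (continuous_integration, continuous_delivery, continuous_deployment):
        if not stage(artifact):
            break
    return artifact
```

Calling `run_pipeline("abc123", passes_application_tests=False)` records the `ci` and `delivery` stages in `history` and never reaches deployment, which is the behavior the gates are meant to enforce.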

Continuous Integration

The build system lets the organization automate Continuous Integration (CI): the now-conventional practice of regularly building the newest version of the software from the current state of the commits in the software repository. CI is carried out to identify the cause of faults as early as possible, because developers must always be able to produce buildable software. Engineering organizations vary in their approach to CI because their values and requirements differ.

The main variables that determine CI are the period and the repository scope.

The period can be:

- Static: CI builds run on a schedule, for example every weekday, overnight, or multiple times a day.

- Ad hoc: a CI build runs on every developer commit.

The repository scope can cover:

- Certain branches of the software repository.

- A subset of the software repositories.

- A subset of the platforms.

Organizations vary the period and the repository scope for several reasons. The main one is the need to control how quickly feedback is delivered: running CI across the entire collection of software repositories, platforms, and branches can take significantly longer to produce results. How a broken CI build gets fixed is the next point of differentiation.
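
The two variables can be made concrete with a small sketch. The `CiPolicy` class and its field names are assumptions made for illustration and are not taken from any particular CI product; the function returns the builds that a single commit would trigger under a given policy.

```python
from dataclasses import dataclass
from fnmatch import fnmatch


@dataclass(frozen=True)
class CiPolicy:
    """Hypothetical CI policy: when to build (period) and what to build (scope)."""
    period: str                      # "static" (scheduled) or "ad_hoc" (every commit)
    branches: tuple = ("main",)      # branch patterns in scope
    platforms: tuple = ("linux",)    # platforms in scope


def builds_for_commit(policy: CiPolicy, branch: str) -> list:
    """Return the (branch, platform) builds that a single commit triggers."""
    if policy.period != "ad_hoc":
        return []  # static policies build on a schedule, not per commit
    if not any(fnmatch(branch, pattern) for pattern in policy.branches):
        return []  # the branch is outside the repository scope
    return [(branch, platform) for platform in policy.platforms]
```

Widening `branches` or `platforms` increases coverage but multiplies the number of builds per commit, which is the speed-versus-coverage tension described above.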

The Broken Builds

Governance of broken CI builds introduces the next level of variables.

The questions that arise are:

- Does a broken build trigger automated communication to the developer and the team?

- Which mediums does the automated communication use: email, chat, message, dashboard, or information radiators?

- What is the policy for remediating a broken CI build? Is the fix the highest priority, outranking feature development and other emergencies?

- Is the developer or the team responsible for the fix?

- How is remediation that is in progress communicated?

- Is there an expiration period for remediation, after which the source commit is reverted to put the CI process back into a good state?

- Are any commits ignored during a long expiration period, and would they result in a further CI build failure?

Once the period and repository scope of CI are settled, the main open question is how best to fix a broken CI build. During the remediation period, every new developer commit is a potential new source of broken builds. For this reason, many organizations move the period variable from a static value to an event-based definition.
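
One of the policies listed above, an expiration period after which the offending commit is reverted, can be sketched as follows. The function name and the two action labels are hypothetical; a real setup would wire the result into whatever notification and version control tooling the organization uses.

```python
from datetime import datetime, timedelta


def remediation_action(broken_since: datetime, now: datetime,
                       expiration: timedelta) -> str:
    """Decide what to do about a broken CI build.

    Returns "notify" while the owner still has time to fix the build,
    and "revert" once the (hypothetical) expiration period has passed.
    """
    if now - broken_since >= expiration:
        return "revert"   # put CI back into a good state
    return "notify"       # for example by email, chat, or dashboard
```

With a two-hour expiration, a build that has been broken for thirty minutes yields `"notify"`, and one that has been broken for two hours or more yields `"revert"`.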

Next Generation Continuous Integration

With cloud computing providing on-demand scale, organizations can shorten the period and widen the repository scope at the same time, so that Continuous Integration delivers both coverage and speed. This raises confidence that the software repository always produces buildable software.

Organizations can change the CI variables to:

- Period: ad hoc, so that every commit triggers a CI build.

- Repository scope: increased coverage of branches, repositories, and platforms, each built by an equivalent CI build.

- Reversion: a broken CI build results in the reversion of the developer commit. Many organizations take the next step and run CI as a pre-commit check, blocking the candidate commit entirely when the CI build fails.
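
The pre-commit check can be sketched as a gate in front of the mainline. This is only an illustration under stated assumptions: `ci_build` is a hypothetical callable that builds and tests a list of commits and returns `True` on success, and commits are represented as plain strings.

```python
from typing import Callable, List


def submit_commit(mainline: List[str], candidate: str,
                  ci_build: Callable[[List[str]], bool]) -> bool:
    """Accept the candidate commit only if CI passes with it applied."""
    if ci_build(mainline + [candidate]):
        mainline.append(candidate)   # the commit lands
        return True
    return False                     # the commit is blocked; mainline is unchanged
```

Because the candidate is built before it lands, a failing commit never reaches the mainline, so there is nothing to revert afterwards.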

Continuous Integration Testing

Once CI has eliminated broken builds, the build artifacts must be tested to confirm that new functionality works and that existing functionality has not been removed or broken along the way. This ensures a minimum level of software quality and is known as Continuous Integration Testing: automated tests are run on freshly built artifacts. For many organizations this stage represents the state of the art, because going further increases both the test scope and the time needed to produce results.

The main questions about these tests include:

- Does the official build system run the tests within the build environment, or do more complex testing requirements call for separate test systems or test providers?

- Are the test systems or test providers held to the same standards as the build system, and are they documented?

- How are the test systems themselves developed and tested, given that they are complex software in their own right?

- How many new features must be tested, and how many existing features must be tested for regression?

- How long does it take to test the full set of features?

- How can functional unit testing be optimized, both within CI and outside of CI?

There is a trade-off between an exhaustive test scope and the time required to test. CI can deliver continuous integration testing results quickly by running an optimized unit test scope, while exhaustive continuous integration testing is run periodically, which gives the best of both sides. This improves software quality and helps bring down the barriers to automated delivery.
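
One way to picture this trade-off is a test selector that runs everything on periodic runs and only the most critical tests, within a time budget, on per-commit runs. The `(priority, duration_seconds)` fields and the budget parameter are assumptions introduced for this sketch; real test runners expose this kind of selection in different ways.

```python
def select_tests(tests: dict, exhaustive: bool, budget_seconds: float) -> list:
    """Pick the tests for one run.

    `tests` maps a test name to (priority, duration_seconds); lower priority
    numbers are more critical. These fields are assumptions for illustration.
    """
    if exhaustive:
        return sorted(tests)   # periodic run: the full suite
    chosen, spent = [], 0.0
    # Per-commit run: most critical tests first, skipping any that exceed the budget.
    for name, (priority, duration) in sorted(tests.items(), key=lambda kv: kv[1]):
        if spent + duration <= budget_seconds:
            chosen.append(name)
            spent += duration
    return chosen
```

A greedy selection like this is deliberately simple; the point is only that the per-commit scope is bounded by time while the periodic run is bounded by coverage.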

Monolithic Application Refactoring

It is natural to align all the engineering teams and have them deliver a single composite piece of software: a monolithic application.

Over time, however, the challenges of scale and coordination break the monolith down, and it evolves into a distributed application architecture. It is worth understanding the turmoil that engineering organizations go through when they decide to refactor their monolith. Breaking it into multiple components decreases the complexity of testing each component, but it increases the complexity of testing the whole application, because all the components now have to be assembled.

The benefit of refactoring the monolith is engineering agility: each team can develop, test, and deploy its own features independently. Unless CI is building a monolith, however, each build artifact covers one isolated component of a larger application, and those components eventually need to work together. This expands the scope and complexity of testing and deployment. DevOps automation addresses the problem by testing the build artifacts of the full application together.

Application Integration Testing and Continuous Delivery

The challenges of microservices, distributed application components, and dependent systems mean that testing must be expanded to cover the full application. Regular integration testing only tests a built artifact in isolation, so a new set of tests is needed that exercises the application as a whole. This is called application integration testing.

In the software delivery pipeline, the word "integration" is overloaded, and its different uses must be kept apart. Continuous integration, strictly speaking, does not necessarily involve any testing. Application integration testing, by contrast, depends on continuous delivery: the application is deployed to an integration environment so that application integration testing can run. For many organizations this is the obvious point of crossing over into DevOps. The entire application must be deployed and orchestrated, combining the newly built artifact with previously validated artifacts, before application integration testing can proceed.

Several new capabilities of the process

This process requires several new capabilities, which introduce further variables:

- Artifact management: which set of artifacts is needed to form the whole application?

- Which existing, validated versions of each artifact can be reused alongside the artifacts built from the candidate?

- How is a set of new resources brought up with the newly built and existing artifacts, and how are they configured to work together?

- Are the artifacts configured through configuration management or by some other method? How is dynamic configuration handled?

- How is the order of resource and artifact dependencies enforced when bringing up the application? Backend services must be ready before the business tier logic can start and connect, and so on throughout the entire application.

- Functional unit tests exercise a feature of a build artifact in isolation. Can the application's features be retested on the whole system?

- Does integration testing also need performance, scale, and security testing?
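
The dependency-ordering question in the list above is a topological sort. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later); the resource names in `dependencies` are hypothetical and only mirror the backend-before-business-tier example.

```python
from graphlib import TopologicalSorter

# Hypothetical application: each resource maps to the resources it depends on.
dependencies = {
    "database": set(),
    "backend": {"database"},
    "business_tier": {"backend"},
    "frontend": {"business_tier"},
}


def startup_order(deps: dict) -> list:
    """Return an order in which every resource starts after its dependencies."""
    return list(TopologicalSorter(deps).static_order())
```

For the chain above, `startup_order(dependencies)` returns the database first and the frontend last. If the dependencies contain a cycle, `static_order()` raises `graphlib.CycleError`, which is a useful early signal that the application cannot be brought up as described.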

Analysis of the above

If an organization can answer the questions above, it can create an ephemeral test environment for the whole application and run integration testing on the deployed build artifacts. Application integration tests can also be optimized down to a small set that covers the critical features and transactions across the application, so that they take as little time as possible within the next-generation ad hoc CI process.

In this way, the DevOps approach enables next-generation continuous integration. Broken builds are eliminated, and automated application integration testing ensures a minimum level of software quality and deployability. Application orchestration and deployability are true examples of the blending of Development and Operations.

There is further scope for performance, security, and longevity testing, all of which build on application integration testing. These are sometimes left out, yet they are strong candidates for automation.

Continuous delivery also demonstrates the operation of a fully orchestrated application. Continuous deployment can then be performed regularly using the same technique.

Continuous Delivery and Continuous Deployment

Let us now look at the difference between Continuous Delivery and Continuous Deployment. In practice, it comes down to the scope of the testing and the destination environment.

Application integration testing is an example of continuous delivery to a test environment. Since nothing from one test run should carry over to the next, the entire test environment must be treated as transient and ephemeral. When a build passes the optimized application integration tests, the procedure can be repeated: the application is deployed to a longer-lived environment, where manual and long-running tests are carried out. This amounts to continuous delivery to a test environment, which is also the environment used by the QA/test teams.

Longer-lived test environments present challenges for the organization. Whether they are needed depends on the testing to be done: they may be kept to preserve reproducible bugs for handoff to other teams, or to perform manual tests that are still required.

These cases illustrate the friction in automation that can hinder ephemeral environments and continuous delivery. In a nutshell, this is another call for DevOps evangelism!

After application integration testing passes, the next targets are staging and production, which can be reached through continuous deployment.

Final Verdict

Every IT organization should determine the scope and duration of its application integration testing, which can be time-consuming and tedious. Although this testing is part of the continuous integration model, it is also a simple and practical way to realize DevOps-style value delivery from the developer to the client. You can also contact Amplework Software Pvt Ltd for the same.

sales@amplework.com

(+91) 9636-962-228
