AI Validation Documentation Requirements: What Evidence Enterprises Must Provide for Compliance

Introduction

Enterprises deploying AI systems face increasing scrutiny from regulators, auditors, and stakeholders who demand proof that AI operates safely, fairly, and reliably. AI validation documentation has shifted from an optional best practice to a compliance requirement in many industries.

Organizations lacking comprehensive validation evidence face regulatory penalties, failed audits, increased legal liability, and reputational damage. Understanding what documentation regulators expect, and how to generate it, helps prevent costly compliance failures.

Why AI Validation Documentation Matters

AI validation documentation is essential for regulatory compliance across industries. Financial services firms must show that their AI is fair, healthcare organizations must demonstrate diagnostic accuracy, and the EU AI Act requires technical documentation for high-risk AI systems. Strong documentation helps mitigate risks, justify design choices, and demonstrate responsible monitoring.

It also strengthens trust and transparency among customers, partners, and employees. By explaining how AI decisions are made and backing those explanations with evidence, organizations demonstrate reliability, support audits, and protect themselves during disputes or investigations.

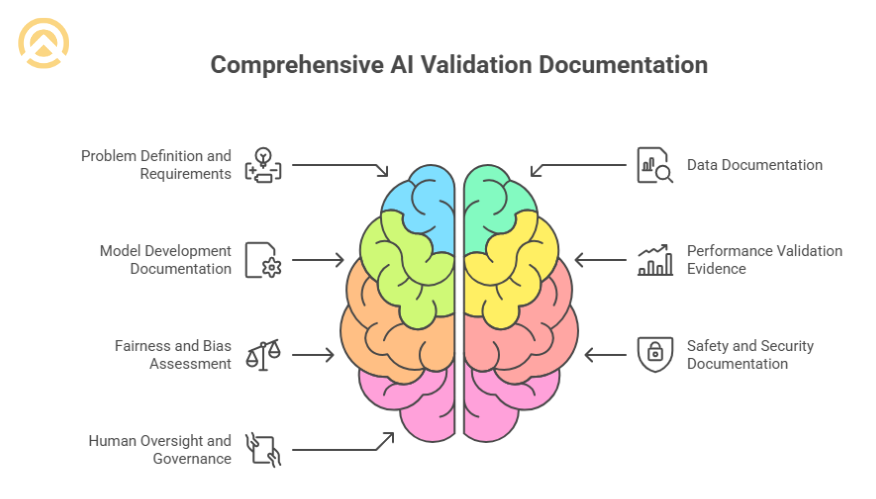

Essential AI Validation Documentation Categories

1. Problem Definition and Requirements

This documentation clarifies the AI system’s goals, scope, limitations, user groups, and success metrics so teams can validate expectations before development begins.
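One way to make success metrics verifiable is to record them as explicit acceptance thresholds and check measured results against them. The Python sketch below is illustrative only: the `SuccessCriterion` class, the metric names, and the threshold values are hypothetical, and it assumes every metric is one where higher is better.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    """One documented success metric and its minimum acceptable value (higher is better)."""
    metric: str
    minimum: float


def check_criteria(criteria: list[SuccessCriterion], measured: dict[str, float]) -> dict[str, bool]:
    """Return pass/fail per criterion; a metric that was never measured counts as a failure."""
    results = {}
    for criterion in criteria:
        value = measured.get(criterion.metric)
        results[criterion.metric] = value is not None and value >= criterion.minimum
    return results


# Hypothetical criteria: names and thresholds are illustrative only.
criteria = [SuccessCriterion("accuracy", 0.90), SuccessCriterion("recall", 0.85)]
print(check_criteria(criteria, {"accuracy": 0.92}))  # {'accuracy': True, 'recall': False}
```

Treating a missing measurement as a failure keeps untested requirements from silently passing review.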

2. Data Documentation

This documentation records data sources, composition, labeling quality, and bias checks to show that training datasets are fair, representative, and compliant with privacy rules.
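A data documentation entry can be kept as a structured, machine-readable record rather than free text, which makes it easier to version and audit. This Python sketch assumes a hypothetical `DatasetRecord` schema; the field names and example values are illustrative, not a regulatory template.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DatasetRecord:
    """Hypothetical schema for one training dataset's documentation entry."""
    name: str
    source: str
    collection_method: str
    labeling_procedure: str
    row_count: int
    contains_personal_data: bool
    bias_checks: list[str] = field(default_factory=list)


# Hypothetical example values for illustration only.
record = DatasetRecord(
    name="loan_applications_2023",
    source="internal CRM export",
    collection_method="applications submitted via web form",
    labeling_procedure="outcome labels taken from final underwriting decision",
    row_count=120_000,
    contains_personal_data=True,
    bias_checks=["approval-rate comparison by age band"],
)
print(json.dumps(asdict(record), indent=2))
```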

3. Model Development Documentation

This captures AI model development details, including algorithm choices, architecture decisions, training methods, and experiment history, to show the system was built through structured, intentional processes.

4. Performance Validation Evidence

This evidence includes accuracy metrics, subgroup results, robustness tests, and error analysis to confirm that the AI performs reliably across different contexts, user groups, and input variations.
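Subgroup results matter because an aggregate score can hide weak performance for a specific group. The following minimal Python sketch computes overall and per-group accuracy from plain lists; the labels, predictions, and group names are hypothetical toy data.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return overall accuracy and a dict of accuracy per subgroup label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    per_group = {group: correct[group] / total[group] for group in total}
    overall = sum(correct.values()) / sum(total.values())
    return overall, per_group


# Hypothetical toy data
y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 0, 1, 0, 0]
groups = ["A", "A", "B", "A", "B", "B"]

overall, per_group = accuracy_by_group(y_true, y_pred, groups)
print(f"overall={overall:.2f}", {g: round(a, 2) for g, a in per_group.items()})
# overall=0.67 {'A': 1.0, 'B': 0.33}
```

Here an overall accuracy of 0.67 hides the fact that group B is classified correctly only a third of the time, which is the kind of gap subgroup evidence is meant to surface.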

5. Fairness and Bias Assessment

These records demonstrate non-discriminatory behavior through fairness metrics, impact analysis, and mitigation strategies for any detected biases.
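For hiring and similar selection decisions, one widely used adverse impact check is the four-fifths (80%) rule from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below four-fifths of the highest group's rate is generally regarded as showing evidence of adverse impact. The Python sketch below applies that ratio to hypothetical counts; it is one screening heuristic, not a complete fairness assessment.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Selection rate per group; outcomes maps group -> (selected, applicants)."""
    return {group: selected / applicants for group, (selected, applicants) in outcomes.items()}


def adverse_impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {group: rate / highest for group, rate in rates.items()}


# Hypothetical hiring outcomes: (candidates selected, total applicants)
outcomes = {"group_x": (48, 80), "group_y": (24, 60)}
ratios = adverse_impact_ratios(outcomes)
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]  # four-fifths threshold
print(flagged)  # ['group_y']
```

With selection rates of 0.60 and 0.40, group_y's ratio is about 0.67, below the 0.8 threshold, so it would be flagged for further analysis and documented mitigation.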

6. Safety and Security Documentation

This documentation outlines adversarial tests, vulnerability checks, failure modes, and monitoring plans to show that the AI remains safe and secure in real-world conditions.
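Robustness testing can be documented as a repeatable measurement, for example how often small input perturbations change the model's output. This Python sketch uses a hypothetical stand-in `predict` function and an assumed noise level of ±0.05; a fixed random seed keeps the result reproducible so it can be recorded as evidence.

```python
import random


def predict(features: list[float]) -> int:
    """Hypothetical stand-in model: returns 1 if the weighted score exceeds 0.5."""
    weights = [0.4, 0.3, 0.3]
    score = sum(w * x for w, x in zip(weights, features))
    return int(score > 0.5)


def flip_rate(inputs: list[list[float]], noise: float = 0.05, trials: int = 20, seed: int = 0) -> float:
    """Fraction of randomly perturbed inputs whose prediction differs from the unperturbed one."""
    rng = random.Random(seed)  # fixed seed so the recorded result is reproducible
    flips = 0
    for x in inputs:
        baseline = predict(x)
        for _ in range(trials):
            perturbed = [v + rng.uniform(-noise, noise) for v in x]
            flips += int(predict(perturbed) != baseline)
    return flips / (len(inputs) * trials)


# Hypothetical test inputs; the last one sits close to the decision boundary.
inputs = [[0.9, 0.8, 0.7], [0.1, 0.2, 0.1], [0.5, 0.5, 0.52]]
print(f"prediction flip rate: {flip_rate(inputs):.1%}")
```

The first two inputs are far enough from the boundary that they never flip, so any instability comes from the near-boundary case, a detail worth recording in failure-mode documentation.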

7. Human Oversight and Governance

This documentation records human control mechanisms, including human-in-the-loop decision points, escalation procedures for edge cases, review and approval workflows, and ongoing governance structures.
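A common human-in-the-loop pattern is to act automatically only on high-confidence predictions and route the rest to a reviewer, recording the route taken for each case. The Python sketch below assumes a hypothetical confidence threshold of 0.85 and hypothetical case IDs; in practice the threshold would be set and justified as part of the governance documentation.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    prediction: str
    confidence: float
    route: str  # "auto" or "human_review"


def route_decision(case_id: str, prediction: str, confidence: float, threshold: float = 0.85) -> Decision:
    """Send predictions below the (hypothetical) confidence threshold to a human reviewer."""
    route = "auto" if confidence >= threshold else "human_review"
    return Decision(case_id, prediction, confidence, route)


# Each routed decision is kept so reviewers and auditors can see who or what decided.
audit_log = [
    route_decision("c-001", "approve", 0.97),
    route_decision("c-002", "deny", 0.62),
]
for decision in audit_log:
    print(decision)
```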

Documentation Requirements by Industry

| Industry | Key Documentation Focus | Primary Regulations |
|---|---|---|
| Healthcare | Clinical validation evidence, safety testing | FDA guidelines, HIPAA |
| Financial Services | Fairness testing, explainability, risk assessment | Fair lending laws, FCRA |
| Hiring/HR | Bias analysis, adverse impact testing | EEOC guidelines, state laws |
| Autonomous Systems | Safety validation, failure analysis | Emerging transportation regulations |
| EU Operations | Comprehensive risk assessment, transparency | EU AI Act requirements |

Creating Effective AI Validation Documentation

1. Start Documentation During the PoC Phase

Begin documenting during the AI compliance PoC to gather early evidence, spot compliance gaps, test documentation processes, and establish long-term documentation standards.

2. Maintain Version Control

Track model versions, performance differences, update reasons, and deployment approvals so organizations can verify which model produced specific decisions when required.
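To verify which model produced a given decision, each decision can be logged alongside the model version and a cryptographic fingerprint of the model artifact. This Python sketch uses the standard-library `hashlib` SHA-256 hash; the version string, placeholder model bytes, and input fields are hypothetical, and a production system would write to durable, append-only storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(artifact: bytes) -> str:
    """SHA-256 digest of the serialized model, so the exact artifact can be verified later."""
    return hashlib.sha256(artifact).hexdigest()


def log_decision(log: list[dict], model_version: str, model_hash: str, inputs: dict, output: str) -> dict:
    """Append one decision record that ties the output to the model that produced it."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "model_sha256": model_hash,
        "inputs": inputs,
        "output": output,
    }
    log.append(entry)
    return entry


# Hypothetical values: placeholder bytes stand in for a real model file's contents.
model_bytes = b"serialized-model-weights"
model_hash = fingerprint(model_bytes)

decisions: list[dict] = []
log_decision(decisions, "credit-risk-v1.3", model_hash, {"income": 52000}, "approve")
print(json.dumps(decisions[-1], indent=2))
```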

3. Automate Documentation Generation

Use automated performance logs, bias dashboards, experiment tracking, and reporting tools to keep documentation consistent, scalable, accurate, and manageable as systems grow.
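Automated documentation can be as simple as rendering recorded metrics into a consistent report on every validation run. This Python sketch uses a hypothetical `render_report` function and placeholder metric values; in practice the inputs would come from the experiment tracking or monitoring tools already in use.

```python
from datetime import date


def render_report(model_version: str, metrics: dict[str, float],
                  subgroup_accuracy: dict[str, float], run_date: date) -> str:
    """Render recorded metrics into a plain-text validation summary with a fixed layout."""
    lines = [f"Validation report: {model_version} ({run_date.isoformat()})", "", "Overall metrics:"]
    lines += [f"  {name}: {value:.3f}" for name, value in sorted(metrics.items())]
    lines += ["", "Accuracy by subgroup:"]
    lines += [f"  {group}: {acc:.3f}" for group, acc in sorted(subgroup_accuracy.items())]
    return "\n".join(lines)


# Placeholder values for illustration; not real validation results.
report = render_report(
    "credit-risk-v1.3",
    {"accuracy": 0.912, "auc": 0.874},
    {"age_18_30": 0.89, "age_31_plus": 0.92},
    date(2024, 1, 15),
)
print(report)
```

Sorting the keys keeps the layout identical across runs, so reports for different model versions can be compared line by line.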

4. Involve Cross-Functional Teams

Combine insights from data scientists, compliance experts, legal teams, and business stakeholders to create aligned, complete, and auditor-ready AI validation documentation.


Common Documentation Failures

- Insufficient Data Documentation: Organizations often lack detailed records of training data sources, collection methods, or labeling procedures. Regulators increasingly demand this transparency.

- Inadequate Testing Documentation: Testing only happy paths without documenting edge cases, failure modes, or robustness creates compliance gaps.

- Poor Explainability: “Black box” documentation that doesn’t explain how AI reaches decisions fails transparency requirements, especially for high-stakes applications.

Build Compliant AI Systems

Creating comprehensive AI validation documentation requires technical expertise, regulatory understanding, and structured processes that many organizations do not have internally. Weak documentation exposes enterprises to serious compliance, legal, and reputational risks.

At Amplework Software, we embed compliance into AI development from the very beginning. Our AI PoC services help validate documentation methods early, confirm regulatory alignment, and ensure every requirement is addressed before moving into full-scale development or deployment.

sales@amplework.com

(+91) 9636-962-228
